How My RLM Tool Works

An LLM writes Python code to do research.

Python reads, sorts, filters, and fans out over search hits.

I give it extra functions to run cheap LLM calls and ask more questions.

An LLM writes code.

A REPL runs it.

The output comes back.

The LLM writes more code.

That loop is a Recursive Language Model (RLM).

The rest of this post unpacks that loop.

What a REPL actually is.

What functions are in scope and why.

How my harness keeps the REPL alive across turns.

How an LLM "calls a tool" through a Pi extension.

How to inspect your RLM logs to improve it.

Pi is an agent harness like Claude Code.

My implementation is a Pi extension written in TypeScript.

The REPL host is a Python subprocess.

What a REPL is

REPL stands for read-eval-print loop.

Most programmers have used one.


Four things happen on every line:

- Read. Python reads what you typed.

- Eval. It runs the code, updating the namespace that holds your variables.

- Print. It shows you the result.

- Loop. It gives you a place to write more code.

The important word is namespace.

Once you assign x = 3, x is still 3 on the next line.

That’s because it’s stored in the namespace.

That's what separates a REPL from a script that runs once and exits.

The read-eval core fits in four lines:


That's the heart of the REPL host.

The namespace stores variables like x from above.

It also stores the functions your code can call.

A real REPL also auto-prints whatever expression is on the last line.

This one doesn’t.

It only prints what is explicitly print()ed.

The LLM uses print() to send output back.
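The read-eval core described above can be sketched in a few lines. This is a minimal sketch, not the actual REPL host: it assumes a plain dict as the namespace and Python's built-in exec, and the names run_block and namespace are made up for illustration.

```python
import contextlib
import io

# One dict holds every variable and function across all blocks.
namespace = {}

def run_block(code: str) -> str:
    """Run one block of code in the shared namespace; return what it printed."""
    captured = io.StringIO()
    with contextlib.redirect_stdout(captured):
        exec(code, namespace)
    return captured.getvalue()

first = run_block("x = 3")           # assigns x, prints nothing
second = run_block("print(x + 1)")   # x is still there on the next block
third = run_block("x + 1")           # bare expression: no auto-print
print(repr(first), repr(second), repr(third))
```

Only the explicit print() produces output. The bare expression in the last block returns an empty string, which is why the LLM has to print() whatever it wants to see.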

The RLM Trick

The LLM is the one writing code into the REPL.

The LLM answers a question by writing Python.

For a minimal example I could ask my RLM "What is 5x5 + 10x10?"

A (kinda stupid) RLM might solve it like this:

"Hmm, let's break this down in my REPL. I need to know what 5*5 is first"


"I also need to know what 10*10 is":


"Great, I have all I need to answer the question. Let’s add them up":

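The three turns can be replayed as a sketch. It assumes the REPL host behaves like exec over one shared dict. The three snippets are a guess at what the model would write, and run_turn is a hypothetical helper.

```python
import contextlib
import io

namespace = {}  # shared across turns, so a and b survive until turn three

def run_turn(code: str) -> str:
    """Execute one turn's code and return its printed output."""
    captured = io.StringIO()
    with contextlib.redirect_stdout(captured):
        exec(code, namespace)
    return captured.getvalue().strip()

turns = [
    "a = 5 * 5\nprint(a)",      # turn 1: what is 5*5?
    "b = 10 * 10\nprint(b)",    # turn 2: what is 10*10?
    "print(a + b)",             # turn 3: add them up
]
outputs = [run_turn(code) for code in turns]
print(outputs)
```

The third turn only works because a and b are still in the namespace from the first two.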

"Isaac, the answer is 125".

The namespace has a few extra things in it before the LLM starts, like:

- context: Variable pre-loaded with lots of search hits from my knowledge base.

- kb_read(path): Function to read the full text of a wiki page (from my personal knowledge base).

- llm_query(prompt): Function to run a cheap LLM call and return the answer.

- llm_query_batched(prompts): Same, but runs many calls in parallel.

- rlm_query(prompt): Function to run another RLM query.

- FINAL(answer): Function to end and provide the final answer.

Here's what a typical LLM turn looks like in real text:


The LLM emits prose plus a fenced code block.

The Pi extension (my RLM tool) extracts the code, runs it in the REPL, captures the output, and sends the output back as the next user message in the LLM's chat history.

On the next turn the LLM might do more, or call FINAL() with its answer.
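Pulling the code out of a reply can be sketched with a regular expression. The real extension is TypeScript, so this Python version only illustrates the idea. The reply text is made up, and extract_code is a hypothetical name.

```python
import re

FENCE = "`" * 3  # built this way so the example can sit inside a fenced block
CODE_BLOCK = re.compile(FENCE + r"(?:python|py)?[ \t]*\n(.*?)" + FENCE, re.DOTALL)

def extract_code(reply: str):
    """Return the first fenced Python block in an LLM reply, or None."""
    match = CODE_BLOCK.search(reply)
    return match.group(1) if match else None

# A made-up turn: prose first, then one fenced code block.
reply = (
    "I'll check which pages the top hits come from.\n\n"
    + FENCE + "python\n"
    + "for hit in context[:3]:\n"
    + "    print(hit['path'])\n"
    + FENCE + "\n"
)
code = extract_code(reply)
print(code)
```

A reply with no fenced block returns None, which is one of the ways the loop can end.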

High-Level Architecture

A quick vocabulary note first.

The whole thing, from user question to REPL to answer, is an investigation.

The LLM driving the REPL is the investigator. It writes the search strategy.

The cheaper LLM that llm_query calls is the analyst.

Three pieces talk to each other:

The user question is a string from your agent.

The Pi extension is the tool Pi can call.

When Pi decides to call rlm_query, this code runs.

It creates the namespace, spawns Python, runs the loop, and returns a final answer.

The Python REPL subprocess is one Python process per rlm_query call.

It has a namespace dict that lives for the whole run.

The extension sends it new code every turn.

That code stores results in the namespace.

One Turn of the Loop

The extension calls the LLM with the running chat history.

The chat history is the system prompt, the original question, and every prior turn's code and output.

The LLM responds with prose and a fenced Python code block.

The extension pulls the code out.

The code block goes to the Python REPL process (stdin).

(Remember it has the persistent namespace.)

Python writes output (stdout), errors (stderr), and any FINAL value back as JSON.

The extension appends that to the chat history as the next user message.

If FINAL was set the loop ends and returns the answer.

Otherwise the next turn starts.

The loop can also end if the LLM stops writing code, runs out of turns, or exhausts its budget.
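The steps above can be sketched as one small driver. This is a simplified, in-process sketch under several assumptions. It runs the code with exec instead of a separate Python subprocess, it skips budget tracking, and scripted_llm is a stand-in that replays canned replies instead of calling a model. None of these names come from the actual extension.

```python
import contextlib
import io
import re

FENCE = "`" * 3
CODE_BLOCK = re.compile(FENCE + r"(?:python)?[ \t]*\n(.*?)" + FENCE, re.DOTALL)

def investigate(question, llm, max_turns=5):
    final = {}

    def set_final(answer):
        final["answer"] = answer

    namespace = {"FINAL": set_final}           # persists for the whole run
    history = [{"role": "user", "content": question}]
    for _ in range(max_turns):
        reply = llm(history)
        history.append({"role": "assistant", "content": reply})
        match = CODE_BLOCK.search(reply)
        if match is None:                      # the LLM stopped writing code
            return reply
        stdout, error = io.StringIO(), ""
        try:
            with contextlib.redirect_stdout(stdout):
                exec(match.group(1), namespace)
        except Exception as exc:               # report errors instead of crashing
            error = f"{type(exc).__name__}: {exc}"
        if "answer" in final:                  # FINAL was called: loop ends
            return final["answer"]
        feedback = f"stdout:\n{stdout.getvalue()}\nstderr:\n{error}"
        history.append({"role": "user", "content": feedback})
    return None                                # ran out of turns

def scripted_llm(history):
    """Stand-in for a real model: replays two canned turns."""
    turn = sum(1 for message in history if message["role"] == "assistant")
    replies = [
        "Compute it first.\n" + FENCE + "python\ntotal = 5 * 5 + 10 * 10\nprint(total)\n" + FENCE,
        "Now answer.\n" + FENCE + "python\nFINAL(str(total))\n" + FENCE,
    ]
    return replies[turn]

answer = investigate("What is 5x5 + 10x10?", scripted_llm)
print(answer)
```

The second turn can use total because the namespace dict outlives each exec call.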

What's in the Namespace

The extension does two things to set up the REPL.

1. Loads the context.

The extension sets a context variable in the REPL.

This has a list of search hits from my knowledge base for the user's question.

Each hit is a dict with the path, a relevance score, and a short snippet.

The LLM sees a description of the variable in the system prompt.

This is its starting point.

2. Installs the builtins.

Functions the LLM can call:

| function | what it does |
|---|---|
| kb_search(query, k=5, scope='wiki') | re-query agentkb for more content |
| kb_read(path) | get full text of one wiki page |
| llm_query(prompt) | one cheap LLM call (string in, string out) |
| llm_query_batched(prompts) | concurrent batch of calls (cap 16) |
| rlm_query(prompt, context=None) | recursive RLM call |
| rlm_query_batched(prompts, contexts=None) | concurrent batch (cap 4) |
| FINAL(answer) | terminate with this answer |
| FINAL_VAR(name) | terminate with the value of namespace variable name |
| SHOW_VARS() | inspect what's in the namespace |

Plus the entire Python standard library (itertools, collections, and so on) and the language itself: list comprehensions, f-strings, everything.
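A sketch of how the context variable and plain Python fit together. The hits are made-up sample data, and the key names path, score, and snippet are assumptions based on the description above.

```python
# Made-up sample data; the keys "path", "score", and "snippet" are assumed.
context = [
    {"path": "wiki/oauth.md", "score": 0.91, "snippet": "OAuth token refresh"},
    {"path": "wiki/sso.md", "score": 0.42, "snippet": "Single sign-on setup"},
    {"path": "wiki/pkce.md", "score": 0.77, "snippet": "PKCE flow for public clients"},
]

# Filter out weak hits, then rank what is left by relevance.
strong = [hit for hit in context if hit["score"] >= 0.5]
ranked = sorted(strong, key=lambda hit: hit["score"], reverse=True)
paths = [hit["path"] for hit in ranked]
print(paths)
```

From here the investigator could pass each path to kb_read, or build one prompt per hit for llm_query_batched.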

The RLM is given another pattern called chunking.

It’s used when a page is too long to deal with.

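One way chunking might look, as a sketch. The chunk size, the overlap, and the prompt wording are all assumptions, and llm_query_batched here is a stub that returns canned strings in place of the real builtin.

```python
def chunk_text(text: str, size: int = 2000, overlap: int = 200) -> list[str]:
    """Split text into fixed-size windows that overlap by `overlap` characters."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    step = size - overlap
    return [text[start:start + size] for start in range(0, len(text), step)]

def llm_query_batched(prompts: list[str]) -> list[str]:
    """Stand-in for the real builtin: returns a canned string per prompt."""
    return [f"notes on a {len(prompt)}-character prompt" for prompt in prompts]

page = "".join(str(i % 10) for i in range(5000))  # stand-in for kb_read(path)
chunks = chunk_text(page)
prompts = [f"Extract facts about OAuth from:\n{chunk}" for chunk in chunks]
notes = llm_query_batched(prompts)
print(len(chunks), len(notes))
```

The overlap keeps a sentence that straddles a boundary visible in both neighboring chunks.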

How the Pi Extension Talks to Python

When the LLM writes kb_search("oauth") in the REPL it runs as a normal Python function.

But kb_search doesn't do the search itself.

It sends the arguments to the Pi extension, which runs the real search and hands the answer back.

From the LLM's view nothing weird happened.

A function returned a value.

kb_search sends the call to the extension via RPC (remote procedure call).

Here's what it looks like on the Python side:


It just gives arguments to the extension and returns what comes back.
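A shim like that can be sketched as follows. The wire format (one JSON object per line with id, method, and params) is an assumption, as is the RpcChannel class. The in-memory StringIO objects stand in for the real pipes, and a canned response plays the part of the extension.

```python
import io
import json

class RpcChannel:
    """Sends one JSON request per line and reads one JSON response per line."""

    def __init__(self, writer, reader):
        self.writer = writer
        self.reader = reader
        self.next_id = 0

    def call(self, method: str, **params):
        self.next_id += 1
        request = {"id": self.next_id, "method": method, "params": params}
        self.writer.write(json.dumps(request) + "\n")
        self.writer.flush()
        response = json.loads(self.reader.readline())
        if response.get("error"):
            raise RuntimeError(response["error"])
        return response["result"]

# In-memory stand-ins for the pipes; the canned response plays the extension.
canned = {"id": 1, "result": [{"path": "wiki/oauth.md", "score": 0.91}]}
to_extension = io.StringIO()
from_extension = io.StringIO(json.dumps(canned) + "\n")
channel = RpcChannel(to_extension, from_extension)

def kb_search(query, k=5, scope="wiki"):
    # No search happens here: the arguments are forwarded and the reply returned.
    return channel.call("kb_search", query=query, k=k, scope=scope)

hits = kb_search("oauth")
sent = json.loads(to_extension.getvalue())
print(hits, sent)
```

The same channel could back llm_query and rlm_query, since each one only forwards arguments and waits for a reply.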

kb_search uses agentkb, a Python library I wrote, to search my knowledge base.

My knowledge base is a very large collection of markdown files.

But if agentkb is a Python library I wrote, why not call agentkb directly from Python? Why the RPC stuff?

Three reasons.

One implementation.

I want to use kb_search by itself sometimes.

Putting it in Pi means the REPL and Pi can use the same implementation.

Change behavior once for both places.

llm_query can't really go any other way.

LLM calls need provider auth, budget tracking, and concurrency limits.

That lives in Pi's model registry.

Once llm_query and rlm_query have to round-trip, doing the same for kb_search is consistent.

Budget, logging, and limits live in one place.

The extension logs all LLM calls, searches, and retries.

Running kb_search in the extension means logging is easy.

I don’t have to add new logging for Python.

But timing is tricky.

The extension sends Python a block of code, and Python sends back the result when the whole block is done.

That's slow.

Inside that block the code might also call kb_search or llm_query several times, and each one needs its own round trip while the block is still running.

So there are two channels.

One carries the big "run this code, here's the result" exchanges.

The other carries the small "I need X" requests the Python functions make mid-run.
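The two-channel idea can be imitated in a single process. This is an analogy, not the real transport: the post describes pipes between a TypeScript extension and a Python subprocess, while this sketch uses a thread and two queues. All names here are hypothetical.

```python
import queue
import threading

rpc_requests = queue.Queue()   # small "I need X" requests made mid-run
exec_results = queue.Queue()   # one "block finished" message per code block

def kb_search(query):
    """REPL-side shim: ask the host, then block until it answers."""
    reply = queue.Queue(maxsize=1)
    rpc_requests.put(("kb_search", query, reply))
    return reply.get()

def run_block(code):
    """REPL side: run the whole block, then report once on the result channel."""
    namespace = {"kb_search": kb_search}
    try:
        exec(code, namespace)
        exec_results.put({"result": namespace.get("result"), "error": None})
    except Exception as exc:
        exec_results.put({"result": None, "error": repr(exc)})

def host_run(code):
    """Host side: serve mid-run requests until the block reports it is done."""
    worker = threading.Thread(target=run_block, args=(code,))
    worker.start()
    served = 0
    while True:
        try:
            method, arg, reply = rpc_requests.get(timeout=0.05)
        except queue.Empty:
            try:
                outcome = exec_results.get_nowait()
            except queue.Empty:
                continue
            worker.join()
            return outcome, served
        reply.put([f"{method} hit for {arg!r}"])  # canned answer, no real search
        served += 1

code = "hits = kb_search('oauth') + kb_search('pkce')\nresult = len(hits)"
outcome, served = host_run(code)
print(outcome, served)
```

The host answers two small requests while the block is still running, then receives a single result for the whole block.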

Recursion: What rlm_query Actually Does

rlm_query is just another builtin in the namespace.

It's the recursive part of recursive language model.

When the investigator calls rlm_query(prompt, context=subset_of_context) a new smaller investigation starts.

New query. New namespace. New REPL. New context.

If the call tree is at the recursion limit, rlm_query downgrades to a plain llm_query.

Budget is shared across the tree.

A max_budget variable caps total LLM spend across all depths and parallel siblings.

If the budget is reached the investigation ends.

Children get whatever the parent has left.

Models can differ by depth.

The investigator (depth 0) runs on the smarter model.

By default that’s GPT 5.4.

Children at depth ≥ 1 and all llm_query calls run on the analyst model.

By default that's GPT 5.4 mini.

Fan-out work doesn't need the big model.
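The depth limit and shared budget can be sketched together. Everything concrete here is an assumption: the Budget class, the MAX_DEPTH value, the per-call costs, and the abstract integer budget units. The stubs return canned strings instead of calling a model.

```python
from dataclasses import dataclass

class BudgetExceeded(RuntimeError):
    pass

@dataclass
class Budget:
    """One object shared by the whole call tree."""
    max_budget: int
    spent: int = 0

    def charge(self, cost: int) -> None:
        if self.spent + cost > self.max_budget:
            raise BudgetExceeded(f"need {cost}, only {self.remaining} left")
        self.spent += cost

    @property
    def remaining(self) -> int:
        return self.max_budget - self.spent

MAX_DEPTH = 2  # assumed recursion limit

def llm_query(prompt: str, budget: Budget) -> str:
    budget.charge(1)                      # cheap analyst call
    return f"analyst answer to: {prompt}"

def rlm_query(prompt: str, budget: Budget, depth: int = 0) -> str:
    if depth >= MAX_DEPTH:                # at the limit: downgrade to llm_query
        return llm_query(prompt, budget)
    model = "investigator" if depth == 0 else "analyst"
    budget.charge(5 if depth == 0 else 2)
    child = rlm_query(f"sub-question of: {prompt}", budget, depth + 1)
    return f"[{model}@{depth}] {child}"

budget = Budget(max_budget=10)
answer = rlm_query("how does oauth refresh work?", budget)
print(answer)
print(budget.spent, budget.remaining)
```

Because every level charges the same Budget object, a child can only spend what the parent has left.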

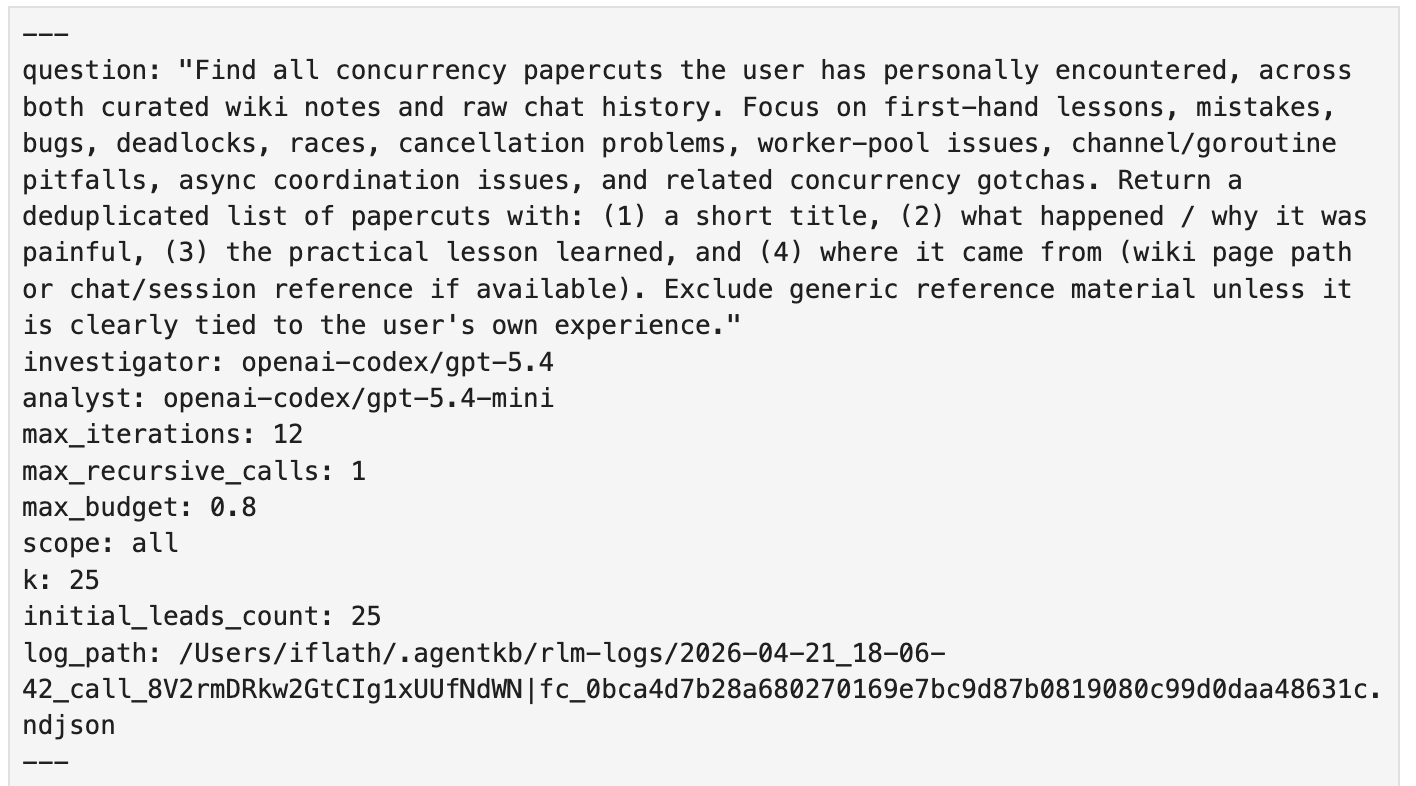

Putting It All Together

Here's the full picture of one rlm_query call.

In parallel a logger appends one NDJSON line per event to a log file.

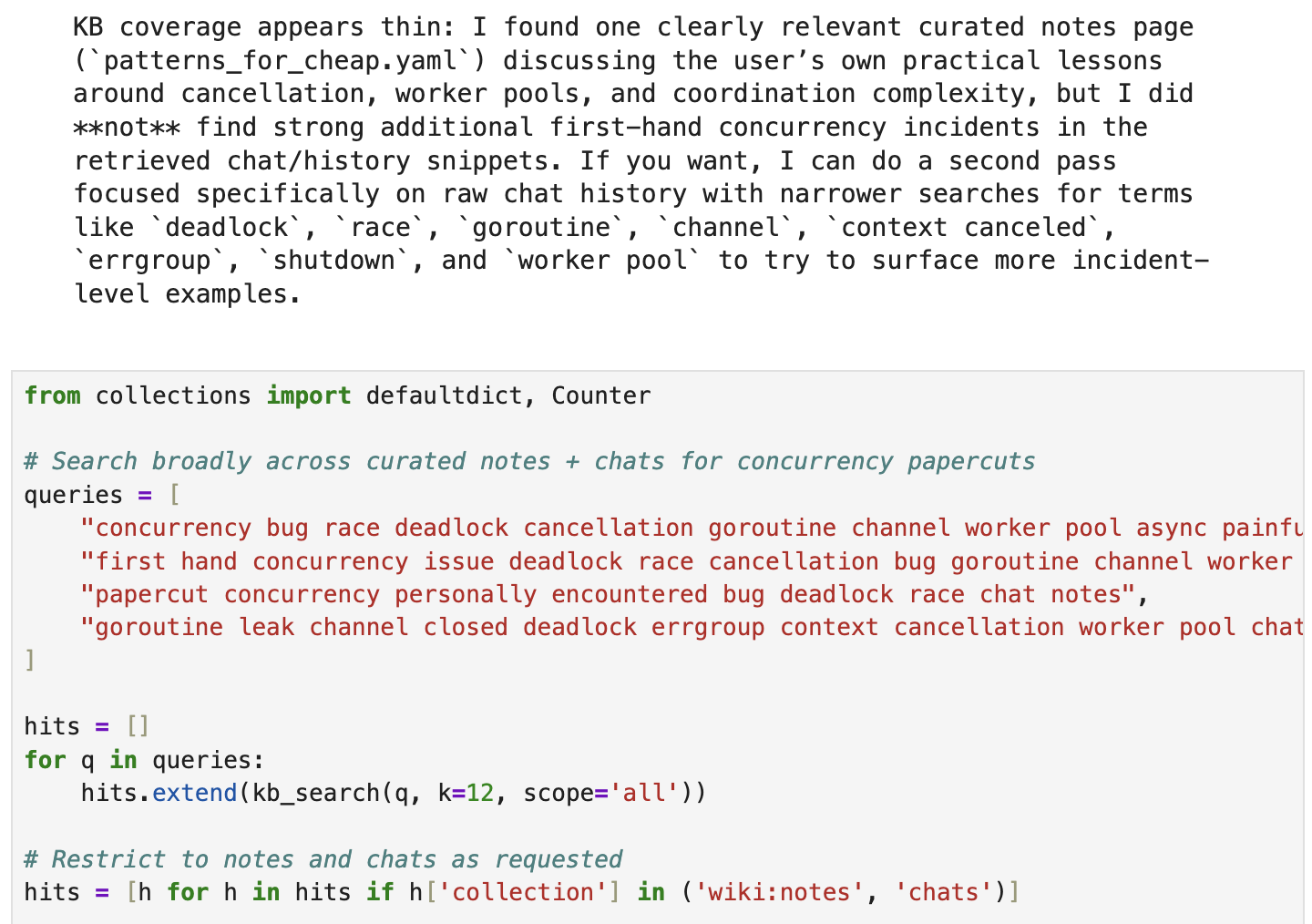

Exploring a Run in Jupyter

Every RLM call writes NDJSON to a log file while it runs.

One line per event: turn starts, assistant prose, code blocks, stdout, RPC calls, final answers.

When the loop finishes a converter turns that log into a Jupyter notebook.

Every run leaves behind a .ndjson and a .ipynb.
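Appending and reading such a log takes a few lines. This is a sketch with invented event shapes: the type, turn, source, text, and method fields are assumptions, not the real log schema.

```python
import json
import os
import tempfile

def log_event(path: str, event: dict) -> None:
    """Append one event as a single JSON line."""
    with open(path, "a", encoding="utf-8") as handle:
        handle.write(json.dumps(event) + "\n")

def read_events(path: str) -> list[dict]:
    """Read the log back, one dict per non-empty line."""
    with open(path, encoding="utf-8") as handle:
        return [json.loads(line) for line in handle if line.strip()]

log_path = os.path.join(tempfile.mkdtemp(), "run.ndjson")
log_event(log_path, {"type": "turn_start", "turn": 1})
log_event(log_path, {"type": "code", "turn": 1, "source": "print(len(context))"})
log_event(log_path, {"type": "stdout", "turn": 1, "text": "12\n"})
log_event(log_path, {"type": "rpc", "turn": 1, "method": "kb_search"})

events = read_events(log_path)
rpc_calls = [event for event in events if event["type"] == "rpc"]
print(len(events), len(rpc_calls))
```

Appending one line at a time means a run that crashes halfway still leaves a readable log.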

The front-matter cell records the question, the models, the budget, and the log path.

Below that the notebook reads as a timeline.

Each investigator turn becomes a markdown cell with the prose followed by a code cell with the Python block and its result attached.

Child investigations are inlined in order.
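The conversion step can be sketched by building the notebook JSON by hand. The event shapes are the same kind of invented ones as before, and events_to_notebook is a hypothetical name. The output follows the Jupyter nbformat 4 layout (cells, metadata, nbformat, nbformat_minor). The real converter may work differently.

```python
import json

def events_to_notebook(events: list[dict]) -> dict:
    """Turn prose, code, and stdout events into notebook cells."""
    cells = []
    for event in events:
        if event["type"] == "prose":
            cells.append({"cell_type": "markdown", "metadata": {}, "source": event["text"]})
        elif event["type"] == "code":
            cells.append({
                "cell_type": "code", "execution_count": None, "metadata": {},
                "outputs": [], "source": event["source"],
            })
        elif event["type"] == "stdout" and cells and cells[-1]["cell_type"] == "code":
            # Attach the output to the code cell that produced it.
            cells[-1]["outputs"].append(
                {"output_type": "stream", "name": "stdout", "text": event["text"]}
            )
    return {"cells": cells, "metadata": {}, "nbformat": 4, "nbformat_minor": 4}

events = [
    {"type": "prose", "text": "I need to know what 5*5 is first."},
    {"type": "code", "source": "print(5 * 5)"},
    {"type": "stdout", "text": "25\n"},
]
notebook = events_to_notebook(events)
serialized = json.dumps(notebook, indent=1)
print([cell["cell_type"] for cell in notebook["cells"]])
```

Writing serialized to a file ending in .ipynb would give something Jupyter can open.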

The first cell is a bootstrap that launches a local rlm-server subprocess and installs the same kb_search, kb_read, llm_query, rlm_query, and FINAL shims the live run used.

It preloads the same context variable from the original leads.

The namespace in the notebook is what the investigator had.

That makes the notebook live, not a replay.

If you change things and run the code you get the result of the “what if”.

What does this let you do?

Prototype. Define new helpers like kb_grep or kb_summarize and use them the way the investigator would.

Eval. Code by hand what the investigator should have done, then compare it to what it did.

Improve. Take what you learn from the comparison and change the system prompt, builtins, or initial context.

This means the traces are live experiments, not logs.
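As an example of the prototyping step, here is one way a kb_grep helper might be tried out in a notebook cell. The post only names kb_grep, so the signature and behavior are guesses. kb_read is stubbed with made-up pages so the cell runs on its own.

```python
import re

# Made-up pages standing in for the knowledge base.
PAGES = {
    "wiki/oauth.md": "OAuth overview\nRefresh tokens expire after 30 days\n",
    "wiki/sso.md": "SSO setup\nUses OAuth under the hood\n",
}

def kb_read(path: str) -> str:
    """Stub for the real kb_read builtin."""
    return PAGES[path]

def kb_grep(pattern: str, paths: list[str], flags: int = re.IGNORECASE):
    """Return (path, line number, line) for every line matching the pattern."""
    regex = re.compile(pattern, flags)
    matches = []
    for path in paths:
        for lineno, line in enumerate(kb_read(path).splitlines(), start=1):
            if regex.search(line):
                matches.append((path, lineno, line.strip()))
    return matches

found = kb_grep(r"refresh", list(PAGES))
print(found)
```

If a helper like this proves useful by hand, it becomes a candidate for the builtins.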

Why This Shape Works

Most tool calls are flat.

The model emits a tool call, you run it, you give it the result, repeat.

Each step is independent.

RLM's shape works for three reasons.

Richer shell.

Coding agents already do search.

They run grep, find, ripgrep.

The model reads stdout and decides what to run next.

RLM is the same idea with a more powerful shell.

Python instead of bash.

Functions instead of static binaries.

The namespace persists across turns so a search result becomes a variable you can sort, slice, fan out over, and feed back to the next search.

Less noise in the main context.

A normal agent searches by emitting tool calls into its own context.

Every hit, every page, every false lead fills the window.

The model writing your code is the same model wading through search noise.

RLM moves all of that into a separate process with a separate LLM.

The investigator can pull 50 hits, read 30 pages, throw 28 of them out, and the main agent never sees the 28.

Three good ideas stacked.

An agentic loop.

A dynamic programming language with persistent state.

Semantic search (late interaction retrieval models keep crushing benchmarks).

Each is powerful alone.

Stacking them handles filtering, fan-out, and synthesis better than any one alone.

The full original paper is at arxiv:2512.24601 (Zhang, Kraska, Khattab, MIT OASYS).

My implementation is different in places. Pipes for IPC instead of TCP. No sandboxed Python process.

But all the core ideas are theirs.

Context as a Python variable, LLM as the programmer, REPL as the runtime.